Rudimentary Cryptology: Pretty PolyCiphers

TLDR: A walkthrough of historical cipher attacks and defences.

Expanding Substitution

Okay, so over the last couple of episodes in this series we've laid down the ground rules for what cryptology is all about, and looked at more substitution ciphers than you probably knew existed. Of course we've done more than that: we've considered how and why they were used, looked at how they were built and why that suited their use case, and taken a fairly basic look at where they are weak and how we can start to exploit that. Now we move into the realm of ciphers that were built in response to these approaches, the first real attempts to make an uncrackable code, and we take a much deeper dive into how to break them. Buckle up crew, cos the captain's getting seasick and his parrot's got us on a bearing to the rocky shoals of PolyCiphers.

Polyalphabetic Ciphers

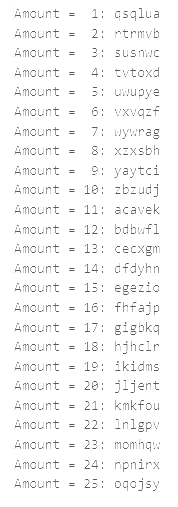

So what exactly is a polyalphabetic cipher, and why is it different from a monoalphabetic cipher? Well, let's differentiate the terms. Substitution ciphers swap one thing for another, often a letter for a different one, but it could be anything. A monoalphabetic cipher is a type of substitution cipher; "mono" means "one", so it substitutes with one alphabet. Think about Caesar, where we just swapped one alphabet for another that was phase shifted. A polyalphabetic cipher is a type of substitution cipher too, but as indicated by "poly", meaning "many", we use many alphabets at once, making it much more complex. Let's consider a simple example to parse this. Take the word "moment": it's six letters long, so why don't we cipher the first three letters with a shift of 3 and the last three letters with a shift of 6? This is trickier, so let's write out our alphabets:

| Plaintext | a | b | c | d | e | f | g | h | i | j | k | l | m | n | o | p | q | r | s | t | u | v | w | x | y | z |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Ciphertext 3 | d | e | f | g | h | i | j | k | l | m | n | o | p | q | r | s | t | u | v | w | x | y | z | a | b | c |
| Ciphertext 6 | g | h | i | j | k | l | m | n | o | p | q | r | s | t | u | v | w | x | y | z | a | b | c | d | e | f |

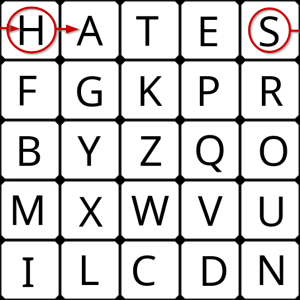

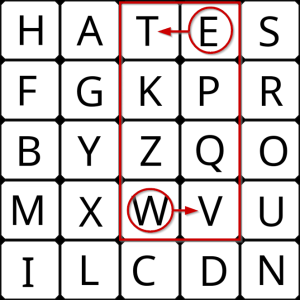

So if we encode based on this idea we end up with "prpktz", which seems little different to a standard Caesar. However, as we saw in the last post, Caesar is very vulnerable to brute forcing, so let's try that now:

Well look at that, if you know where to look both halves are visible: undoing a shift of 3 gives "momhqw" and undoing a shift of 6 gives "jljent". Because they never appear at the same time, though, there is no single shift that breaks this simple polycipher, meaning we have made it significantly more secure than Caesar. This is the principle polyalphabetic ciphers are founded on, and it's worth remembering that for hundreds of years these ciphers were sufficient. Even by modern day standards, while they have their weaknesses, they can prove nigh on impossible to break unless you have substantial knowledge of the system. Just think back to Kerckhoffs and remember that their only real flaw is that they cannot be verified, as the verification process would render their encryption useless. That is why these ciphers are not considered good enough: they will always be vulnerable to ingenious individuals and the occasional stroke of luck, and that is no basis for a cryptographic system. Just because we can't easily crack it doesn't mean a system is good.
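If you want to try the split-shift idea yourself, here is a minimal Python sketch. The helper names `caesar` and `split_shift` are hypothetical ones chosen for illustration, and it assumes the input is lowercase letters only.

```python
import string

ALPHABET = string.ascii_lowercase


def caesar(text: str, shift: int) -> str:
    """Shift every lowercase letter by `shift` places, wrapping around at z."""
    return "".join(ALPHABET[(ALPHABET.index(ch) + shift) % 26] for ch in text)


def split_shift(text: str, first: int = 3, second: int = 6) -> str:
    """Encrypt the first half with one shift and the second half with another."""
    half = len(text) // 2
    return caesar(text[:half], first) + caesar(text[half:], second)


ciphertext = split_shift("moment")
print(ciphertext)

# Brute force: undo every possible single shift and eyeball the candidates.
# No single row contains the whole word, only one half at a time.
for shift in range(26):
    print(f"{shift:2d}: {caesar(ciphertext, -shift)}")
```

Scanning the output by eye, the row for shift 3 starts with the first half of the word and the row for shift 6 ends with the second half, but no row ever shows both.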

Tabula Recta and Trithemius

The birth of the polyalphabetic cipher can be traced back to Arabia in the late middle ages, but it didn't take long for the ideas to make their way to Europe. I don't want to focus on people too much but an Italian polymath named Leon Battista Alberti and a German monk named Johannes Trithemius are generally credited as the founders of European cryptography. Alberti came up with an idea for a movable cipher disk built off a recently invented type of printing press, I just want to focus in on this for a moment as this idea is pivotal in cryptography and crops up again later. This disk looked a lot like the Caesar cipher disk we saw previously where you can turn the inner circle to select the correct shift, but the idea here is that you would keep moving the inner circle as you work.

Alberti proposed moving it by a pre-defined value \(k\) every three or four words, thus changing the cipher in play. As we have already seen, this simple method is quite effective, though using entire words would make it more visible to someone attempting to brute force it. Trithemius thought he could do better and proposed changing the shift by 1 after every letter. This was simple and easy to remember with no need for a key, but resulted in a vastly superior cipher. This is where the disk becomes interesting, because now you have devised a typing mechanism that progresses with every letter you type: if you set it to a shift of 0 and keep typing A, then as the shift increments you will get ABCDEF etc. This means you are no longer typing with one cipher but up to 26, changing every letter, so any attempt to brute force will appear as random as any other output.

Now people didn't have access to this new device, nobody did because it was nothing more than an idea, but Trithemius realized this didn't matter. He simply extended the tables people would use to write a Caesar translation to every letter of the alphabet, and thus he built a tabula recta, an encoding table that could be used to translate the message using his newly designed polyalphabetic cipher, the Trithemius cipher, all you had to do was remember where you were in the table, so it was very user friendly. Unfortunately this was its greatest downfall, without a key an attacker already knew everything they needed to start deciphering it and its regular periodicity (incrementing 1 every letter) meant that as soon as you realised there was more than one alphabet in play it didn't last long.
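The table and the "move on one row per letter" rule can be sketched in a few lines of Python. This is only an illustration under my own assumptions: the names `TABULA_RECTA`, `trithemius_encrypt` and `trithemius_decrypt` are hypothetical, the optional `start` row is an addition for experimenting, and the input is assumed to be lowercase letters with no spaces.

```python
import string

ALPHABET = string.ascii_lowercase

# The tabula recta: row n is the alphabet shifted left by n places,
# so row 0 is the plain alphabet and row 3 is the Caesar shift of 3.
TABULA_RECTA = [ALPHABET[n:] + ALPHABET[:n] for n in range(26)]


def trithemius_encrypt(plaintext: str, start: int = 0) -> str:
    """Use the next row of the table for each successive letter."""
    out = []
    for position, ch in enumerate(plaintext):
        row = TABULA_RECTA[(start + position) % 26]
        out.append(row[ALPHABET.index(ch)])
    return "".join(out)


def trithemius_decrypt(ciphertext: str, start: int = 0) -> str:
    """Look each letter up in the same row and read off the column header."""
    out = []
    for position, ch in enumerate(ciphertext):
        row = TABULA_RECTA[(start + position) % 26]
        out.append(ALPHABET[row.index(ch)])
    return "".join(out)


print(trithemius_encrypt("aaaaaa"))
```

Notice there is no key argument anywhere, which is exactly the weakness: anyone who knows the method can run the decrypt function.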

Vigenère, Gronsfeld and Beaufort

While polyalphabetic ciphers were for some time believed to be unbeatable, Blaise de Vigenère recognised that simplicity often tended to be the weakness of ciphers. So he introduced a key into the Trithemius cipher to secure it better, using it to hop around the table in a more randomized manner. The result was what we now know as the Vigenère cipher, and it gained great popularity for its supposed impregnability, only helped by its ease of use. It was able to achieve these seemingly mutually exclusive goals because each letter of the keyword indicated which shift to use, and the keyword was simply repeated for as long as the message required. Let's give an example using the keyword "cake" and the message "We Shall Fight on the Beaches":

| Plaintext | weshallfightonthebeaches |
|---|---|
| Keyword | cakecakecakecakecakecake |
| Ciphertext | yeclclvjkgrxqndlgboeehow |

If you take a close look you will see "e" appears at positions 2, 17 and 19, and respectively turns into "e", "g" and "o". This is where the strength of polyalphabetic ciphers lies: any letter could potentially become any other depending on the circumstances it is translated in. We can also see with "a" and "c" in "beaches" that the reverse is true, as they both become "e". Hang on a second, I warned against doing that previously, as we wouldn't know which letter to translate back to. We don't need to worry though: the translation is specific to the shift, so there is no doubt about which letter each needs to be decrypted into, as only one is right for the shift used at that position.
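The example above is easy to reproduce in Python. This is a minimal sketch rather than a definitive implementation: the function name `vigenere` and its `decrypt` flag are my own, and it assumes both message and key are lowercase letters with spaces already removed.

```python
def vigenere(text: str, key: str, decrypt: bool = False) -> str:
    """Shift each letter by the matching key letter (a=0, b=1, ...).

    The key is repeated for as long as the text requires. Decryption
    applies the same shifts in the opposite direction.
    """
    sign = -1 if decrypt else 1
    out = []
    for i, ch in enumerate(text):
        shift = ord(key[i % len(key)]) - ord("a")
        out.append(chr((ord(ch) - ord("a") + sign * shift) % 26 + ord("a")))
    return "".join(out)


ciphertext = vigenere("weshallfightonthebeaches", "cake")
print(ciphertext)
print(vigenere(ciphertext, "cake", decrypt=True))
```

Because decryption knows which key letter was used at each position, the two "e"s that came from "a" and "c" turn back into the right letters without any ambiguity.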

The Vigenère cipher was for a long time (until 1863 in fact) considered the bee's knees in cryptography. In France it was often referred to as the indecipherable cipher, but this didn't mean nobody tried to come up with a better one. Two such attempts were made by Gronsfeld and Beaufort (the inventor of the wind scale). Their ciphers were heavily based on Vigenère, but their results weren't much to write home about. Gronsfeld used a numerical key of digits 0-9 instead, using only 10 of the shifts and discarding the rest; reducing the keyspace, as we've discussed before, is generally a bad idea as it makes the cipher easier to crack. Beaufort's idea was a little better: he wrote the tabula recta backwards, adding a layer of obscurity to the result. This effectively performed what we call an inversion. From a security perspective it made almost no difference; from a practical standpoint it meant that the plaintext was subtracted from the key rather than added to it. Largely speaking these variants made no difference to the overall security of the cipher, which is why they are mostly forgotten.
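To make the difference between the two variants concrete, here is a small Python sketch. The function names `digit_key_encrypt` and `beaufort` are hypothetical, the inputs are assumed to be lowercase letters (and a string of digits for the numeric key), and this is an illustration of the arithmetic rather than a historical reconstruction.

```python
A = ord("a")


def digit_key_encrypt(plaintext: str, digits: str) -> str:
    """Vigenère restricted to shifts 0-9: each key digit is the shift."""
    return "".join(
        chr((ord(ch) - A + int(digits[i % len(digits)])) % 26 + A)
        for i, ch in enumerate(plaintext)
    )


def beaufort(text: str, key: str) -> str:
    """Subtract the text letter from the key letter, modulo 26.

    Because key - (key - p) = p, running the function a second time
    with the same key decrypts, so one function does both jobs.
    """
    return "".join(
        chr((ord(key[i % len(key)]) - ord(ch)) % 26 + A)
        for i, ch in enumerate(text)
    )


print(digit_key_encrypt("weshall", "2014"))
scrambled = beaufort("weshallfight", "cake")
print(scrambled, beaufort(scrambled, "cake"))
```

The numeric key can never reach shifts 10 to 25, which is the keyspace reduction described above, while the subtraction variant uses all 26 shifts but is no harder to attack than Vigenère itself.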

Autokey and the Running Cipher

Vigenère did not rest on his laurels, as just 14 years later he published a new, stronger cipher known as the autoclave or autokey cipher. Our modern brains tend to interpret "auto" to mean automatic or machine-like, and while that is certainly true here (the cipher likely being one of the reasons the meaning shifted over time), the name actually uses the older meaning you will find in the word "autobiography": "self". This development is all about the realisation of the flaw of key reuse. We will look at it on the larger scale later, but for now what I want you to notice is how the Vigenère key just repeats over and over. This repetition is the cipher's greatest weakness and is only compounded by shorter keywords like the one I used: "cake" is only four letters, so it cycles quickly, making it easy to spot, and it also only uses 4 alphabets to encode with. Even if those alphabets are chosen randomly this is excessively limiting, as each letter can only be encoded to 4 options in the ciphertext. Autokey goes some way to resolve this. It's a lot easier to show than explain, so let's take a look at an example using the same key and message as before, but this time with autokey:

| Plaintext | weshallfightonthebeaches |
|---|---|
| Keyword | cakeweshallfightonthebea |
| Ciphertext | yeclwpdmirsywtaasoxhgiis |

Okay so you can see it starts out the same as Vigenère, but everything changes once the key ends. Instead of repeating that key over and over generating a noticeable repeating pattern and limiting the alphabet use, it starts to use the plaintext - now out of sync - as part of the key. In terms of the problems we were looking to solve it has done a great job, it now doesn't repeat at all - excellent security move - and uses a much broader set of alphabets. On the downside it still isn't perfect, it doesn't use every alphabet so the keyspace is still being limited, though the longer the message the greater the chances it will be fully expanded. Another not so great thing is it does still repeat some of the alphabets it uses, while a lot harder to spot this is still a weakness, as what you really want is a completely random and unpredictable stream. Finally it includes the plaintext as part of the key, this is typically a bad move as it is supposed to remain secret and it gives an extra opportunity to extract it without needing to crack the cipher itself. By now you're starting to pick up a lot of the principles that make a cryptosystem, historic or modern, either good or bad, and are beginning to develop intuitions about what sort of mistakes you should look for to break them. In a couple of posts we'll start to formalize these conventions so we can build a toolkit for thinking about cryptology, and that's really what all the mathematics is actually for.
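Here is a minimal Python sketch of autokey, assuming lowercase letters only. The names `autokey_encrypt` and `autokey_decrypt` are my own for illustration. The interesting part is decryption: the key stream has to be rebuilt one letter at a time, because each recovered plaintext letter becomes part of the key for a later position.

```python
A = ord("a")


def autokey_encrypt(plaintext: str, key: str) -> str:
    """Key stream is the keyword followed by the plaintext itself."""
    stream = (key + plaintext)[:len(plaintext)]
    return "".join(
        chr((ord(p) - A + ord(k) - A) % 26 + A)
        for p, k in zip(plaintext, stream)
    )


def autokey_decrypt(ciphertext: str, key: str) -> str:
    """Rebuild the key stream as we go: every decrypted letter extends it."""
    stream = list(key)
    out = []
    for i, c in enumerate(ciphertext):
        p = chr((ord(c) - ord(stream[i])) % 26 + A)
        out.append(p)
        stream.append(p)
    return "".join(out)


ciphertext = autokey_encrypt("weshallfightonthebeaches", "cake")
print(ciphertext)
print(autokey_decrypt(ciphertext, "cake"))
```

This also shows why putting plaintext in the key is risky: a correct guess at any stretch of the message immediately hands an attacker the key for the letters that follow it.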

The running cipher was developed some 300 years later in 1892 and finally found a way to produce a "key stream" as suggested before, elevating this type of cipher to about the best it can be while still being usable by the average person. The source was not endless, as it was based on the text of a book, but it only needed to be as long as the message itself. Here's how it worked: the key would be some notable book that both parties could easily access, and the message was encrypted using the text from that book, either starting at the beginning or at an agreed starting point. The text had spaces, special characters and numbers removed, and the letters then told you which column of the tabula recta to use, much as Vigenère did. Words of course aren't completely random in nature, which is a weakness of this algorithm, but with a sufficiently long stream with no clear pattern it was pretty good. It is a fair bet that even today you would be hard pushed to crack this cipher; it actually becomes easier to try and guess which book has been used. Here is an example using a book that is for me quite close to a bible: The C Programming Language by Brian Kernighan and Dennis Ritchie, 2nd edition.

| Plaintext | neverinthefieldofhumanconflictwassomuchowedbysomanytosofew |
|---|---|
| Keyword | cisageneralpurposeprogramminglanguageithasbeencloselyassoc |
| Ciphertext | pmnexmaxyeqxycscxljdottozrtviewnymosykavwwefcfqxofcemsgxsy |

Here you can clearly see how I've encrypted another of Churchill's famous phrases using a stream of text from the start of the book. A simple and surprisingly effective cipher that would serve the purpose of our everyday secret communications, but even this we must remember is not bulletproof, and for truly secure communications we should accept nothing less.
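As a rough Python sketch of the same process: strip everything but letters from both the message and the book text, then add them together letter by letter. The function name `running_key_encrypt` is hypothetical, and the book text passed in below is just the key stream from the table with its spacing and punctuation put back as my own guess at how the source sentence reads.

```python
A = ord("a")


def letters_only(text: str) -> str:
    """Drop spaces, digits and punctuation; keep a-z in lowercase."""
    return "".join(ch for ch in text.lower() if "a" <= ch <= "z")


def running_key_encrypt(message: str, book_text: str) -> str:
    """Add each message letter to the book letter at the same position."""
    plain = letters_only(message)
    key = letters_only(book_text)
    if len(key) < len(plain):
        raise ValueError("the book passage must be at least as long as the message")
    return "".join(
        chr((ord(p) - A + ord(k) - A) % 26 + A) for p, k in zip(plain, key)
    )


book = "C is a general-purpose programming language. It has been closely associated"
quote = "Never in the field of human conflict was so much owed by so many to so few"
print(running_key_encrypt(quote, book))
```

Apart from the cleaning step this is the same addition as Vigenère; the only thing that has changed is that the key never gets the chance to repeat.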

Frequency Analysis

We've talked about frequency analysis a fair bit so far, perhaps not directly, but it's always been there, hanging like a shadow over our ciphers. Monoalphabetic ciphers, as we previously discussed, have such a small keyspace that we can typically brute force them, simply trying every key until we find the right one. Polyalphabetic ciphers however make this difficult to achieve, and generally impractical at best if not impossible, as there are no easily identifiable indicators that you have the correct shift and each shift only applies to some of the letters. Language however has an inherent "flaw" built in. It's not really a flaw of the language, as language is built for communication not secrecy, but it is a flaw of using something not designed for secrecy to keep secrets. The "flaw" is that letters are not used evenly or distributed randomly; there is a pattern to them, and we can abuse this pattern to undermine the ciphers. Here is an example of a frequency analyzer from my cryptolocker:

```python
#!/usr/bin/env python3
# freana.py - A simple script to analyse letter frequency in a text file.
# Usage: freana.py -f <file> -s <skip>
import argparse
from collections import Counter


def count_letters(text):
    """Print each letter's share of the text as a percentage, most common first."""
    freq = Counter(char for char in text.upper() if char.isalpha())
    length = sum(freq.values())
    print('Letter frequency:')
    for k, v in freq.most_common():
        print(f"{k}: {(v/length)*100:.2f}")


def skip_letters(text, skip):
    """Analyse every skip-th character separately, once per starting offset.

    With skip=1 the whole text is analysed in one go. The slicing counts
    every character, so strip spaces and punctuation from the file first
    if the key did not advance over them.
    """
    for i in range(skip):
        count_letters(text[i::skip])


if __name__ == '__main__':
    parser = argparse.ArgumentParser(description='A simple script to analyse letter frequency in text.')
    parser.add_argument('-f', '--file', help='The file to analyse.', required=True, type=argparse.FileType('r'))
    parser.add_argument('-s', '--skip', help='The skip value for the text.', default=1, type=int)
    args = parser.parse_args()
    text = args.file.read().strip()
    skip_letters(text, args.skip)
    print('Done.')
```

This shockingly short bit of code will analyze a piece of text for you and tell you how often each letter comes up. How is that useful? Well, used on a sufficiently large piece of English text it should tell you that the characters from most frequent to least are roughly E (12.02%), T (9.10%), A (8.12%), O (7.68%), I (7.31%), N (6.95%), S (6.28%), R (6.02%), H (5.92%), D (4.32%), L (3.98%), U (2.88%), C (2.71%), M (2.61%), F (2.30%), Y (2.11%), W (2.09%), G (2.03%), P (1.82%), B (1.49%), V (1.11%), K (0.69%), X (0.17%), Q (0.11%), J (0.10%), Z (0.07%). This is like the fingerprint of the English language. It can vary, but pick any English text of around 40,000 words or more and you should find it comes pretty close. Every language has its own fingerprint, and they are mostly fairly easy to look up if you need them.

Okay great, so we can detect languages, what use is that? Well, if you have a monoalphabetic cipher of a text and you run a frequency analyser, you will find the same percentages and order, but the letters are all mixed up. In fact it should come fairly close to telling you exactly which letters have been switched for which: if T has 1.11%, it is most likely standing in for V. The best way to go about this is to start at the top with E and work down, swapping the letters back until you can easily tell what the message says.

This is especially useful on something like the plugboard we talked about. The letters being scrambled means brute force would require us to look at every permutation of letter ordering there is, and this grows larger the more items there are. Given we have 26 items, the number of keys would be \(26!\), which is about \(4 \times 10^{26}\). That is a really big number: even a machine performing 4 billion operations per second, as previously discussed, would need around \(10^{17}\) seconds, or roughly 3 billion years, to try them all. So brute force is not really feasible for a computer, never mind for us as a person, but we can easily count letters and use the frequency to tell us which letters they actually are. Polyalphabetic ciphers however are more problematic. Because they don't always encode to the same letter for the same input, not only does this scramble attempts at brute forcing, it also affects the letter frequency. Think about it: using our Vigenère cipher with "cake", an E can be encoded to one of four letters, G, E, O, or I, so rather than the 12% all landing on one letter it will on average be split between the four of them, around 3% each. This means the fingerprint is a lot more scrambled too. There are often no standout frequencies, as it all becomes averaged out into a sort of generic noise, and the longer the key, the more alphabets are used and the more averaged the frequencies. This isn't the end for our frequency analyzer though.

These polyalphabetic ciphers have been able to hide their frequency in the noise by smearing out their peaks with the law of averages, but it is still there, hidden in the signal. We can draw it back out again by adjusting our focus and applying a filter. The reason this works is that the averaging relies on different alphabets being used, but there are only 26 to choose from. As such it stands to reason that the longer a message is, the greater the chance of alphabets being repeated, and if we can predict when these repeats occur we can filter for them and analyze the message as if it were monoalphabetic. If that didn't make much sense, think of it this way: imagine I used a Vigenère cipher with a key of just "c". Because the key repeats when it ends, the key stream is "ccccccccc" etc., effectively a monoalphabetic cipher of shift 2. Now think about my earlier key of "cake". If I as an attacker successfully guess the key is 4 letters long and only look at the first letter of every group of four, I would get "c---c---c---c---c---" and so on, which again is effectively a monoalphabetic cipher. I can then look at the second letter of every group, giving me a monoalphabetic cipher with a shift of 0 (which is actually no change). I can follow the same process for each letter until I know every alphabet, and thus know both the key and the plaintext.

This means all you need to do is run a frequency analyzer with increasing skip values until the frequencies magically line up with the fingerprint. The skip that works tells you how long the keyword is, and then it is simply a question of cracking that many Caesar ciphers to get the keyword. So you can see that even at this powerful level of cipher a frequency analyzer can crack open the cryptosystem. The only ones really immune to this are the autokey and running ciphers, because they don't really repeat. However the first uses the plaintext, which makes it more vulnerable, potentially unlocking most of the message, and the other is simply a question of finding the right book, so none of them are truly secure.
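The attack can be sketched in Python as follows. To be clear about what is mine here: the text describes lining frequencies up by eye, whereas this sketch swaps in two standard measurements to do the same job automatically. The index of coincidence scores how "peaky" each column is, and a chi-squared comparison against the English percentages quoted earlier picks the shift for each column. All function names, the `threshold` of 0.06 and the `max_length` of 12 are illustrative assumptions, and it expects lowercase letters only.

```python
from collections import Counter

# English letter frequencies in percent, as quoted earlier in this post.
ENGLISH = {
    "e": 12.02, "t": 9.10, "a": 8.12, "o": 7.68, "i": 7.31, "n": 6.95,
    "s": 6.28, "r": 6.02, "h": 5.92, "d": 4.32, "l": 3.98, "u": 2.88,
    "c": 2.71, "m": 2.61, "f": 2.30, "y": 2.11, "w": 2.09, "g": 2.03,
    "p": 1.82, "b": 1.49, "v": 1.11, "k": 0.69, "x": 0.17, "q": 0.11,
    "j": 0.10, "z": 0.07,
}
A = ord("a")


def index_of_coincidence(text):
    """Chance that two letters drawn at random from the text are the same."""
    n = len(text)
    if n < 2:
        return 0.0
    return sum(c * (c - 1) for c in Counter(text).values()) / (n * (n - 1))


def guess_key_length(ciphertext, max_length=12, threshold=0.06):
    """Shortest skip whose columns look monoalphabetic on average, else None."""
    for length in range(1, max_length + 1):
        columns = [ciphertext[i::length] for i in range(length)]
        average = sum(index_of_coincidence(col) for col in columns) / length
        if average >= threshold:
            return length
    return None


def chi_squared(text):
    """How far the text's letter counts sit from the English fingerprint."""
    counts = Counter(text)
    total = len(text)
    score = 0.0
    for letter, percent in ENGLISH.items():
        expected = total * percent / 100
        score += (counts.get(letter, 0) - expected) ** 2 / expected
    return score


def best_shift(column):
    """Try all 26 Caesar shifts and keep the one that looks most English."""
    def undo(shift):
        return "".join(chr((ord(ch) - A - shift) % 26 + A) for ch in column)
    return min(range(26), key=lambda shift: chi_squared(undo(shift)))


def recover_key(ciphertext, key_length):
    """Crack each column as its own Caesar cipher; the shifts spell the key."""
    return "".join(
        chr(best_shift(ciphertext[i::key_length]) + A) for i in range(key_length)
    )
```

In use you would call `guess_key_length(ciphertext)` and feed the result into `recover_key`. Both steps need a decent amount of ciphertext per column to be trustworthy, so on short messages expect to try a few candidate lengths by hand.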

Polygraphic Ciphers

You've probably never heard of polygraphic ciphers, or seen one used. Don't worry, neither had I before starting this series. It's important to understand how niche this is: most people know nothing of computational security; of those that do, few are familiar with cryptography; of those that are, most aren't well versed in historical ciphers; and of those that are, almost none have encountered polygraphic ciphers. Why is this? They must have been around a while or they wouldn't be mentioned here, and that's true, but they are unusual. While the idea has floated around since 1563 (contemporary to Vigenère), when Giambattista della Porta came up with bigraphic substitution, the difficulties in generating them meant that the first one wasn't developed until 1854, almost 300 years later. This massive gap is partly to blame, as the heyday of historical ciphers was pretty much over by the time they arrived; you can see the same effect in how the running cipher isn't anywhere near as popular as autokey, despite being better. The other major reason is how awkward they are to use. They did solve a lot of issues from earlier ciphers and were used by some, even the military for a time, but as soon as better options came along polygraphic ciphers fell into disuse.

So, how do they work? I mean, surely at this point, to fix the remaining flaws in the language we have to step away from the concept of exchanging one letter for another, thus destroying any chance at detecting frequencies, right? Exactly right actually. Instead of looking at how to pick a random letter to replace each one with, polygraphic ciphers look at multiple letters at once, most often pairs, also known as "digraphs" ("di-" means two, and "-graph" loosely means symbols or drawings). Obviously trigraphs, quadgraphs, or any kind of polygraph could be used, but as you'll see shortly two is difficult enough, so it is unusual to move beyond digraphs. The idea is simple: you take two letters at a time and translate them into a single symbol, thus destroying the individual character frequency, as a letter won't always be paired in the same way and the law of averages, as we saw previously, smooths the frequencies out. The key here is that as the averaging is right inside the encoding it can no longer be reversed, and into the bargain you also change the length of the message, adding further security. It's a great idea, but firstly it assumes there is no frequency fingerprint to digraphs, which isn't true; there is, it's just less pronounced. More importantly it has one really big problem: the symbols.

Think about it: up until now we've only needed 26 symbols, one for each letter of the alphabet, but if you are no longer encoding letters individually you now need a symbol for every single case that arises. To have a symbol for every pair of characters you need \(26^2 = 26 \times 26 = 676\). If you aren't sure of the maths, that's just all 26 possibilities for the first character multiplied by all 26 possibilities for the second character. 676 symbols is a lot, especially when you need characters that you can understand at a glance and easily tell apart. For reference, a Japanese kana syllabary gets by with only about 50 basic characters, so you run into a real problem in developing the tools you need to do the job. Another way you could approach it is using phonics. If you've never heard of this ask a primary school teacher, but it's about mapping the sounds of our language. The reason this could work is that many of the sounds are digraphs, but not all, and a large number are still single letters, thus significantly reducing the number of symbols you would need. There are around 44 sounds in the English language and some of them have 5 or 6 ways they can be spelled, which is still substantially less than 676. The problem with this method is twofold: firstly, knowing and understanding the phonics would effectively require everyone using the cipher to have a degree in English language, which is not ideal, and secondly phonics are just about as fingerprintable as the letters themselves, if not more so, undermining the entire point. So it's a difficult problem to solve, but in 1854 Charles Wheatstone did, when he developed the Playfair cipher.

Playfair

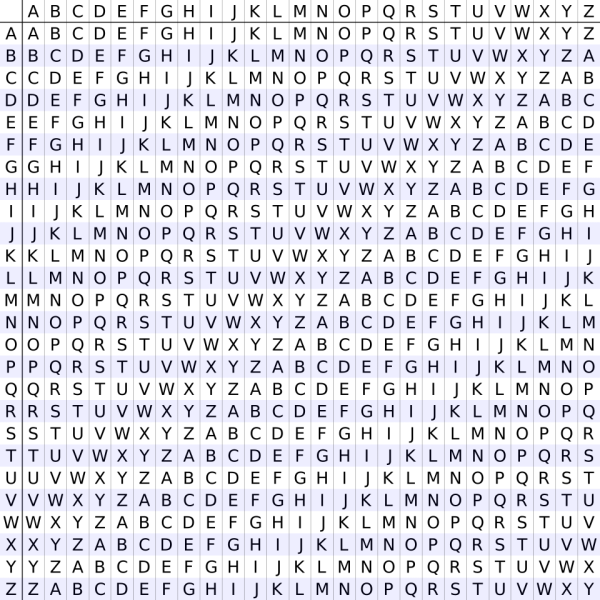

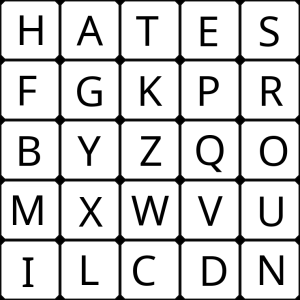

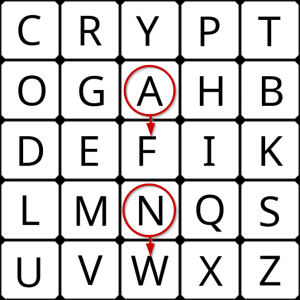

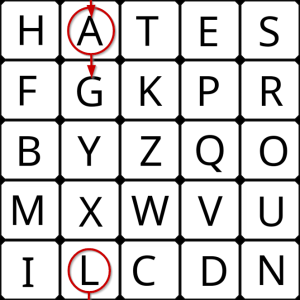

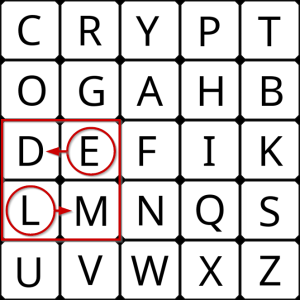

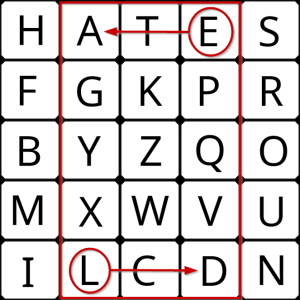

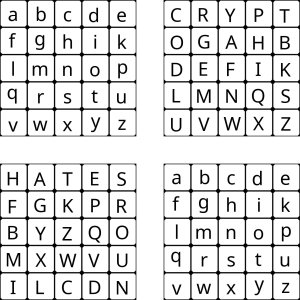

So how did Wheatstone solve what nobody else in 300 years could? Well, the first thing he did was recognise that fancy symbols would make his cipher stand out, so instead of a single symbol he simply used another pair of letters as a digraph, so obvious it seems silly. The second thing he did was recognise that to retain the encryption of a digraph rather than individual letters he would need to use pairwise encryption. To put it another way, he would need to encrypt the letters together so that each part affects how the other is encrypted, and this way they are effectively entangled. So how do you do that? It's probably easiest if I show you. First you start with a keyword or phrase to ensure the secrecy of the algorithm. I'll try two for a couple of examples: first "Cryptography" is a good one, and then for comparison I'll use the three best words to start Wordle with, "Hates round climb", but you can choose anything. These words are then used to build a 5 by 5 grid. This can be done in a few ways: you could simply write them left to right, or you could make a spiral starting on the outside and working its way to the middle. As we do this we will run into a few issues. Firstly, we should skip any repeated letters. Secondly, once we reach the end of the keyword we go through the alphabet from a to z, including in order any letters not used so far. Thirdly, 26 letters is 1 more than you can fit into a 5 by 5 grid, so we get around this by skipping J or Q; the most common way is to skip J and consider it to have the same position as I.

Hopefully you should be able to see how I was able to generate these two grids using the two keys, with left to right for the first and a spiral pattern for the second, as well as get a rough idea of how we can engineer these tables to get a distribution we like. Now we need to establish the rules for how to encode the message using these grids, but let's start with a message and I'll show you the rules in action step by step. The message we shall use will be this rather famous quote: "we shall fight on the beaches, we shall fight on the landing grounds, we shall fight in the fields and in the streets". Let's prepare it for digraphic encryption by removing the spaces and punctuation and splitting it into digraph pairs: "we sh al lf ig ht on th eb ea ch es we sh al lf ig ht on th el an di ng gr ou nd sw es ha ll fi gh ti nt he fi el ds an di nt he st re et s". Now we can move on to the rules.
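For anyone following along without the grid images, here is a Python sketch of the grid building and pair splitting described above. Everything named here (`key_sequence`, `grid_left_to_right`, `grid_spiral`, `digraphs`) is hypothetical, and the spiral direction is an assumption on my part: clockwise from the top-left corner, which is the layout that agrees with the worked pairs later in this section.

```python
import string

# Playfair alphabet: 25 letters, with j sharing a cell with i.
LETTERS = [ch for ch in string.ascii_lowercase if ch != "j"]


def key_sequence(keyword):
    """Keyword letters without repeats, then the unused alphabet in order."""
    seen = []
    for ch in keyword.lower().replace("j", "i"):
        if ch in LETTERS and ch not in seen:
            seen.append(ch)
    return seen + [ch for ch in LETTERS if ch not in seen]


def grid_left_to_right(keyword):
    """Fill the 5 by 5 grid row by row."""
    seq = key_sequence(keyword)
    return ["".join(seq[r * 5:(r + 1) * 5]) for r in range(5)]


def grid_spiral(keyword):
    """Fill clockwise from the top-left corner, working in to the centre."""
    seq = iter(key_sequence(keyword))
    grid = [[""] * 5 for _ in range(5)]
    top, bottom, left, right = 0, 4, 0, 4
    while top <= bottom and left <= right:
        for c in range(left, right + 1):          # along the top edge
            grid[top][c] = next(seq)
        for r in range(top + 1, bottom + 1):      # down the right edge
            grid[r][right] = next(seq)
        if top < bottom:
            for c in range(right - 1, left - 1, -1):  # back along the bottom
                grid[bottom][c] = next(seq)
        if left < right:
            for r in range(bottom - 1, top, -1):      # up the left edge
                grid[r][left] = next(seq)
        top, bottom, left, right = top + 1, bottom - 1, left + 1, right - 1
    return ["".join(row) for row in grid]


def digraphs(message):
    """Strip everything but letters, then chop the text into pairs."""
    text = "".join(ch for ch in message.lower() if ch in string.ascii_lowercase)
    return [text[i:i + 2] for i in range(0, len(text), 2)]


for row in grid_left_to_right("Cryptography"):
    print(" ".join(row))
print()
for row in grid_spiral("Hates round climb"):
    print(" ".join(row))
```

Note that `digraphs` only does the splitting; doubled letters and a leftover single letter are dealt with by the first encoding rule.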

- If both letters are the same (or only one letter is left), add an "X" after the first letter. Encrypt the new pair and continue. Some variants of Playfair use "Q" instead of "X", but any letter, itself uncommon as a repeated pair, will do.

We have one double LL and one single letter S, this means LL -> LX and S -> SX, then we encrypt them as we normally would following the remaining rules.

- If the letters appear on the same row of your table, replace them with the letters to their immediate right respectively (wrapping around to the left side of the row if a letter in the original pair was on the right side of the row).

For DI in the first grid this applies, and for SH in the second grid this applies, so they are encoded as follows:

DI -> EK and SH -> HA

- If the letters appear on the same column of your table, replace them with the letters immediately below respectively (wrapping around to the top side of the column if a letter in the original pair was on the bottom side of the column).

For AN in the first grid this applies, and for AL in the second grid this applies, so they are encoded as follows:

AN -> FW and AL -> GA

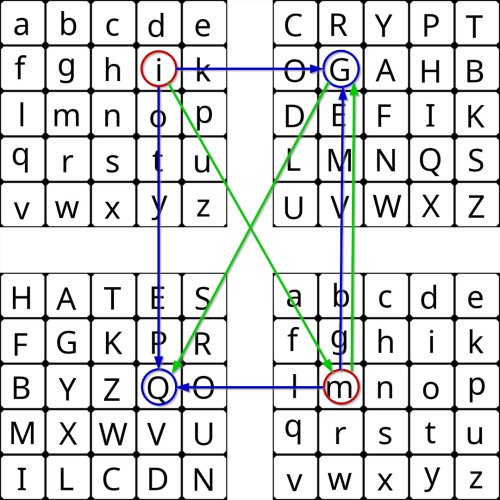

- If the letters are not on the same row or column, replace them with the letters on the same row respectively but at the other pair of corners of the rectangle defined by the original pair. The order is important – the first letter of the encrypted pair is the one that lies on the same row as the first letter of the plaintext pair.

This applies to most cases, so I will show WE and then EL in both tables to indicate how they are encoded:

WE -> VF and WE -> VT

EL -> DM and EL -> AD

This should provide all the information you need to know to be able to understand the cipher and correctly build the ciphertext below. To decrypt, use the inverse (opposite) of the two shift rules, selecting the letter to the left or upwards as appropriate. The last rule remains unchanged, as the transformation switches the selected letters of the rectangle to the opposite diagonal, and a repeat of the transformation returns the selection to its original state. The first rule can only be reversed by dropping or replacing any extra instances of the chosen insert letter - generally "X"s or "Q"s - that do not make sense in the final message when finished. Give it a try on these ciphertexts and you will see they return to the original message.
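The four rules translate fairly directly into code. The sketch below is illustrative only: the function names are my own, the two grids are typed in as literals reconstructed from the keywords and the worked pairs above (so treat them as an assumption if your grids differ), and a doubled pair is handled the way the worked example does it, by swapping the second letter for "x".

```python
GRID_1 = ["crypt", "ogahb", "defik", "lmnqs", "uvwxz"]  # "Cryptography", left to right
GRID_2 = ["hates", "fgkpr", "byzqo", "mxwvu", "ilcdn"]  # "Hates round climb", spiral


def locate(grid, letter):
    """Return (row, column) of a letter, treating j as i."""
    letter = "i" if letter == "j" else letter
    for r, row in enumerate(grid):
        if letter in row:
            return r, row.index(letter)
    raise ValueError(f"{letter!r} is not in the grid")


def playfair_pair(grid, pair, decrypt=False):
    """Encrypt (or decrypt) one digraph using the four rules."""
    step = -1 if decrypt else 1
    first = pair[0]
    second = pair[1] if len(pair) > 1 else "x"   # rule 1: pad a lone letter
    if not decrypt and first == second:
        second = "x"                             # rule 1: break up a double
    (ra, ca), (rb, cb) = locate(grid, first), locate(grid, second)
    if ra == rb:                                 # rule 2: same row, step sideways
        return grid[ra][(ca + step) % 5] + grid[rb][(cb + step) % 5]
    if ca == cb:                                 # rule 3: same column, step vertically
        return grid[(ra + step) % 5][ca] + grid[(rb + step) % 5][cb]
    return grid[ra][cb] + grid[rb][ca]           # rule 4: swap rectangle corners


def playfair(grid, pairs, decrypt=False):
    """Apply the pair rule to a whole list of digraphs."""
    return [playfair_pair(grid, pair, decrypt) for pair in pairs]


print(playfair(GRID_1, ["di", "an", "we", "el"]))
print(playfair(GRID_2, ["sh", "al", "we", "el"]))
```

The rectangle rule is its own inverse, which is why only the row and column rules need the `step` direction flipped for decryption.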

| Plaintext | we | sh | al | lf | ig | ht | on | th | eb | ea | ch | es | we | sh | al | lf | ig | ht | on | th | el | an | di | ng | gr | ou | nd | sw | es | ha | ll | fi | gh | ti | nt | he | fi | el | ds | an | di | nt | he | st | re | et | s |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Ciphertext 1 | vf | qb | on | nd | eh | bp | al | pb | kg | fg | po | km | vf | qb | on | nd | eh | bp | al | pb | dm | fw | ek | ma | eg | dc | lf | nz | km | bh | qu | ik | ab | pk | sy | gi | ik | dm | kl | fw | ek | sy | gi | zb | gm | kr | qz |
| Ciphertext 2 | vt | ha | ga | ig | lf | ae | us | ea | hq | st | it | sh | vt | ha | ga | ig | lf | ae | us | ea | ad | sl | nl | lr | kf | un | in | tu | sh | at | al | bh | fa | hc | cs | as | bh | ad | ne | sl | nl | cs | as | he | ps | se | au |

So, how does it fare as a cipher? Well, pretty strongly. Obviously it will never come close to modern cryptographic methods, and with enough data none of these historic ones are that secure, but it will hold up very well against a lot of different attacks. It is of course not without weaknesses, and you may have spotted a few. Firstly, as already mentioned, digraphs do have a frequency fingerprint to them, so if you figure out that's what you are looking at you can begin unravelling it, though it isn't as easy as with previous ciphers. Next up, you may have noticed that the same pairs of letters still get encoded the same way, so it lacks some of the random nature of later polyalphabetic ciphers, though about 50% of the time the offset will be different, meaning you get a different pair, so there is some averaging going on. One telltale sign in this cipher, as you might have noticed, is that if you flip a pair around, the ciphertext it produces is the same as flipping the original ciphertext ("ht" becomes "bp" and "th" becomes "pb" in the first grid). This might seem small but it is a valuable tool, particularly for detecting words which naturally have this behaviour, like "render". These words aren't that common and so can provide insights into the plaintext. On the whole this cipher is used so rarely it's not all that important to know how to break it specifically; if that interests you, Wikipedia seems to be the best source right now.

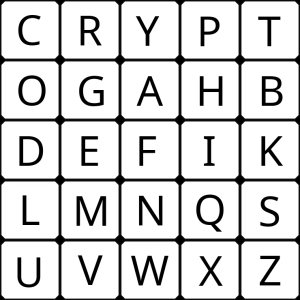

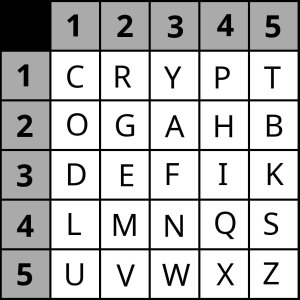

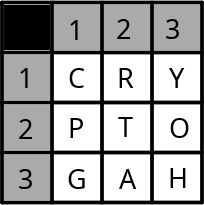

Bifid and Four-Square

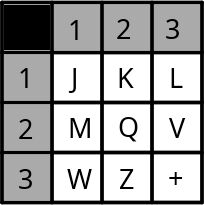

Bifid and Four-Square ciphers were both designed in 1901 by French cryptographer Felix Delastelle. They were built off similar concepts to Playfair and simply used a different methodology to obtain a cipher, so they didn't really offer any enhancements. Let's look at how they worked, starting with the Bifid cipher. You start off by building a Polybius square, which is the square we already used in Playfair so we already know how to make one. This is actually an encryption device that dates back to the ancient Greeks, when you would use a simple Caesar shift within the box, but it really found its stride with the polygraphics. The difference here is we also need to number the columns and rows.

Now we need a message to send, so let's go with this one: "God does not play dice." As usual we'll remove spaces and symbols, and I'll use lowercase as I find it less aggressive to look at. Then we need to encode the message by using the numbers as a coordinate system.

| Plaintext | g | o | d | d | o | e | s | n | o | t | p | l | a | y | d | i | c | e |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Row | 2 | 2 | 3 | 3 | 2 | 3 | 4 | 4 | 2 | 1 | 1 | 4 | 2 | 1 | 3 | 3 | 1 | 3 |

| Column | 2 | 1 | 1 | 1 | 1 | 2 | 5 | 3 | 1 | 5 | 4 | 1 | 3 | 3 | 1 | 4 | 1 | 2 |

Now we ignore the pairings and read it out as rows, left to right like we would a book: 223323442114213313211112531541331412

Then pair them off, so each pair contains elements of two letters, entangling them much like the Playfair approach did: 22 33 23 44 21 14 21 33 13 21 11 12 53 15 41 33 14 12

And we take these new pairs and use them to select the new characters using the original table to generate our ciphertext: GFAQOPOFYOCRWTLFPR
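The steps above can be sketched in a few lines of Python. This is only a minimal sketch: the square used here is an assumption on my part (the keyword "cryptography" followed by the rest of the alphabet with j dropped), chosen because it is consistent with the row and column numbers in the table, and the function names are my own.

```python
# Assumed 5x5 square, read row by row: keyword "cryptography", then the
# remaining letters, with j left out. Consistent with the coordinate table.
SQUARE = "cryptogahbdefiklmnqsuvwxz"

def bifid_encrypt(message: str) -> str:
    # Keep only letters that exist in the square (drops spaces, symbols and j).
    letters = [ch for ch in message.lower() if ch in SQUARE]
    rows = [SQUARE.index(ch) // 5 for ch in letters]
    cols = [SQUARE.index(ch) % 5 for ch in letters]
    stream = rows + cols  # read the row line first, then the column line
    # Pair the digits off and look each new pair up in the same square.
    return "".join(SQUARE[r * 5 + c] for r, c in zip(stream[0::2], stream[1::2]))

def bifid_decrypt(ciphertext: str) -> str:
    stream = []
    for ch in ciphertext.lower():
        idx = SQUARE.index(ch)
        stream += [idx // 5, idx % 5]  # row then column, in one long line
    half = len(stream) // 2
    # First half holds the original rows, second half the original columns.
    return "".join(SQUARE[r * 5 + c] for r, c in zip(stream[:half], stream[half:]))

print(bifid_encrypt("God does not play dice.").upper())  # GFAQOPOFYOCRWTLFPR
print(bifid_decrypt("GFAQOPOFYOCRWTLFPR"))               # goddoesnotplaydice
```

The code counts rows and columns from 0 rather than 1, which makes no difference to the result as long as you are consistent.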

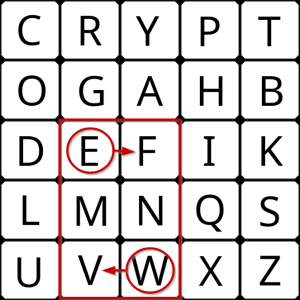

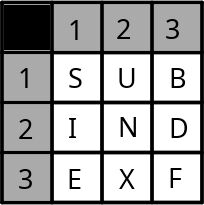

Decrypting is simply a question of reversing the process: build the Polybius square, then write out the coordinates for each ciphertext letter, row then column, all in one long line. Find the midpoint of that line, split on the midpoint and line the two halves up to produce the original coordinates, then use them to decode the message. That's all there is to it. The Four-Square cipher, as the name suggests, uses four squares, but you will see it's still quite similar to the others. To do this one we will need 4 squares of letters, but it's not so bad: two of them are just the plaintext alphabet in lowercase, so a-z, remembering of course to exclude either j or q. I prefer j as it can share the i space. The other two are Polybius squares, so it should look like this:

Once you've got your squares laid out, you need your message. We'll use "Imagination is more important than knowledge." and, much like Playfair, we pair up the letters, removing any symbols or spaces, standardizing the case and completing any unpaired letter with a placeholder. Which gives us this: im ag in at io ni sm or ei mp or ta nt th an kn ow le dg ex

Now with each pair we:

- Find the first letter in the digraph in the upper-left plaintext matrix.

- Find the second letter in the digraph in the lower-right plaintext matrix.

- The first letter of the encrypted digraph is in the same row as the first plaintext letter and the same column as the second plaintext letter. It is therefore in the upper-right ciphertext matrix.

- The second letter of the encrypted digraph is in the same row as the second plaintext letter and the same column as the first plaintext letter. It is therefore in the lower-left ciphertext matrix.

Which looks a bit like this, you can use the green arrows to follow the order to ensure you get the characters the right way around.

This will give you the ciphertext: GQ RF AQ PM HQ IK MZ EV LE IW NP YB AO ED KH RP YN

To decrypt all you have to do is the reverse: build the four squares, find the ciphertext pair in the upper-right and lower-left ciphertext squares respectively, then read off the values in the plaintext squares. As you can see, while subtly different, Four-Square is very similar to Bifid in how it just muddles up and intertwines the locations, producing a digraphic ciphertext pair. These both do have one small advantage over Playfair in that they don't suffer from the reversing letters problem, so it does make them a little harder to detect and crack, though only marginally.
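Here is a rough Python sketch of the row and column swapping described above. The two keywords ("example" and "keyword") are purely hypothetical stand-ins, not the ones behind the squares in this post, so the output will not match the ciphertext shown earlier. The function names are my own too.

```python
PLAIN = "abcdefghiklmnopqrstuvwxyz"  # 25 letters, j shares the i cell

def keyed_square(keyword: str) -> str:
    # Keyword letters first (duplicates dropped), then the rest of the alphabet.
    ordered = dict.fromkeys(keyword.lower().replace("j", "i") + PLAIN)
    return "".join(ch for ch in ordered if ch in PLAIN)

def four_square(text: str, upper_right: str, lower_left: str, decrypt: bool = False) -> str:
    text = "".join(ch for ch in text.lower().replace("j", "i") if ch in PLAIN)
    if len(text) % 2:
        text += "x"  # placeholder to complete the last pair
    out = []
    for a, b in zip(text[0::2], text[1::2]):
        if decrypt:
            # Find the pair in the two ciphertext squares, read the plaintext squares.
            r1, c2 = divmod(upper_right.index(a), 5)
            r2, c1 = divmod(lower_left.index(b), 5)
            out.append(PLAIN[r1 * 5 + c1] + PLAIN[r2 * 5 + c2])
        else:
            # First letter sits in the upper-left square, second in the lower-right.
            r1, c1 = divmod(PLAIN.index(a), 5)
            r2, c2 = divmod(PLAIN.index(b), 5)
            out.append(upper_right[r1 * 5 + c2] + lower_left[r2 * 5 + c1])
    return "".join(out)

# Hypothetical keys, for illustration only.
UPPER_RIGHT = keyed_square("example")
LOWER_LEFT = keyed_square("keyword")

secret = four_square("Imagination is more important than knowledge.", UPPER_RIGHT, LOWER_LEFT)
print(secret)
print(four_square(secret, UPPER_RIGHT, LOWER_LEFT, decrypt=True))
```

Both directions are the same rectangle trick, just starting from different squares, which is why a single function with a `decrypt` flag is enough.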

Trifid

The Trifid cipher does something entirely different: it doesn't use digraphs, it does something incredibly weird and instead uses trigrams (three letters). How on earth does it pull that off? Well, can you honestly tell me you knew how to perform a digraphic polycipher before reading this? I doubt it. There's a way, it just requires the right approach. The first thing to note about this cipher is that it was written by Felix Delastelle in 1902, just one year later. Why does that matter? Because a single person tends to think one particular way, and you can see the patterns in their work, so it should come as no surprise that the Trifid cipher is like an extension of the Bifid cipher, and it uses the self-same coordinate based system. Where it does differ, however, is that it uses 3 tables instead of 1, so let's take a look.

So with three tables we can number them 1, 2 and 3, and then identify any of the 27 characters contained within (the 26 letters plus one extra symbol) using a three digit mapping, for example A is found at 132. If we record these values for the plaintext vertically, then we can horizontally read the ciphertext trigraphs in much the same way as we did for Bifid. Let's try it out using the message "The Day of the Triffids":

| Message | T | H | E | D | A | Y | O | F | T | H | E | T | R | I | F | F | I | D | S |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Table | 1 | 1 | 2 | 2 | 1 | 1 | 1 | 2 | 1 | 1 | 2 | 1 | 1 | 2 | 2 | 2 | 2 | 2 | 2 |

| Row | 2 | 3 | 3 | 2 | 3 | 1 | 2 | 3 | 2 | 3 | 3 | 2 | 1 | 2 | 3 | 3 | 2 | 2 | 1 |

| Column | 2 | 3 | 1 | 3 | 2 | 3 | 3 | 3 | 2 | 3 | 1 | 2 | 2 | 1 | 3 | 3 | 1 | 3 | 1 |

| Ciphertext | R | S | P | P | T | N | D | V | O | F | U | Z | U | L | F | V | T | H | G |

And that's all there really is to it; decrypting is simply a question of reversing the process. Trigrams are of course even trickier to analyze than bigrams, but not impossible. As usual there is a natural frequency that can be detected, though to detect it you do require significant amounts of text, which is why these polygraphic ciphers remained in use right up until the appearance of modern cryptography.
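To make the three digit mapping a bit more concrete, here is a small Python sketch of the same idea. Be warned that the 27 character layout below is hypothetical: it starts with the first grid used in this post, but the rest of the ordering and the "+" filler symbol are my own assumptions, so the output will not match the table above. It also treats the whole message as one block, as described here, rather than splitting it into shorter groups.

```python
# Hypothetical layout: three 3x3 tables read one after another.
# The first nine characters match the first grid; the rest is assumed.
ALPHABET = "cryptogahbdefijklmnqsuvwxz+"

def to_triple(ch: str) -> tuple:
    idx = ALPHABET.index(ch)
    return idx // 9, (idx % 9) // 3, idx % 3  # (table, row, column), counted from 0

def from_triple(table: int, row: int, col: int) -> str:
    return ALPHABET[table * 9 + row * 3 + col]

def trifid_encrypt(message: str) -> str:
    letters = [ch for ch in message.lower() if ch in ALPHABET]
    tables, rows, cols = zip(*(to_triple(ch) for ch in letters))
    stream = tables + rows + cols  # written vertically, read horizontally
    return "".join(from_triple(*stream[i:i + 3]) for i in range(0, len(stream), 3))

def trifid_decrypt(ciphertext: str) -> str:
    stream = [digit for ch in ciphertext.lower() for digit in to_triple(ch)]
    n = len(stream) // 3
    # Cut the long line back into its table, row and column lines.
    return "".join(from_triple(*t) for t in zip(stream[:n], stream[n:2 * n], stream[2 * n:]))

secret = trifid_encrypt("The Day of the Triffids")
print(secret)
print(trifid_decrypt(secret))
```

With this layout `to_triple("a")` comes out as `(0, 2, 1)`, which is the 132 from earlier once you count from 1 instead of 0.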

Hill

First off let me apologise, we've done a great job of dodging maths so far, and hopefully that has developed some great intuitions about cryptology, however the time where we must start to embrace the mathematics is rapidly approaching. The Hill cipher was devised by Lester S. Hill in 1929, and he was first and foremost a renowned mathematician. Unfortunately that means that while his cipher is good in a number of ways thanks to his mathematical skills, it also means you need a good grasp of mathematics topics to understand it, most notably matrices. It took a while before this sort of approach really took off, but you'll see that gradually more and more these ciphers begin to head that way, so if maths is not your strong suit try to follow along as best you can and you may feel the need to learn specific mathematical areas to make sense of it all. I'll try to demystify it as much as possible for you, and I still stand by my belief that you don't need good maths skills to see the flaws in cryptographic systems, you just need a hacker's mind, something that most mathematicians don't have, and is why cryptologists often make glaring mistakes in their algorithms.

Alright, so let's start with something simple: let's number our letters. No fancy tricks needed, this is just a tool to help us. I'll start from A at zero and work my way up, nice and simple.

| Letter | A | B | C | D | E | F | G | H | I | J | K | L | M | N | O | P | Q | R | S | T | U | V | W | X | Y | Z |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Number | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | 17 | 18 | 19 | 20 | 21 | 22 | 23 | 24 | 25 |

Easy peasy. Now, I've listed the Hill cipher under Polygraphic ciphers, so that should tell us something about how it works. All the other Polygraphics so far have included a grid of letters, and the Hill cipher is no different; what is different is how it uses them. So let's look at the first of the three Trifid grids from the context of the Hill cipher. If you compare it with our number pairings we could write this grid as a matrix like this:

\[ \begin{pmatrix} 2 & 17 & 24\\ 15 & 19 & 14\\ 6 & 0 & 7 \end{pmatrix} \pmod{26}\]

What Hill realized is that, unlike his peers who used "simple tricks" to blend letters into digraphs, when you look at it this way you can use matrix transformations, which is a much more mathematically pure way of achieving what they were after. As it happens those "simple tricks" were actually doing a lot of confusion and diffusion, which are something we will talk about later, and Hill was clearly quite aware of these concepts as his methods sought to maximize them. The end result is actually a cipher that is marginally weaker, but theoretically more extensible; it just becomes more and more impractical the more you require it to do, so it never really took off. As an indication of just how impractical, I tried to use some of our existing grids just to see if I could make a functional example, but the matrices involved quickly become very complex and gross, so for simplicity I've stolen one from Wikipedia. So let's take a closer look at how this operates. We take our message, let's pick a nice short one and use "help". Our enciphering matrix is a 2x2 (see below), so this Hill cipher is digraphic and we split our message into 2 character pieces, each of which gives us a 2x1 matrix. It's important for maths reasons that the last number of the first matrix size and the first number of the second matrix size match for matrix multiplication; it just ensures the output matrix is the right size and shape and that the multiplication is doable.

\[ K = \begin{pmatrix} D & D\\ C & E \end{pmatrix} \rightarrow \begin{pmatrix} 3 & 3\\ 2 & 5 \end{pmatrix}\\ HELP \rightarrow \begin{pmatrix} H\\ E \end{pmatrix}, \begin{pmatrix} L\\ P \end{pmatrix} \rightarrow \begin{pmatrix} 7\\ 4 \end{pmatrix}, \begin{pmatrix} 11\\ 15 \end{pmatrix}\\ \begin{pmatrix} 3 & 3\\ 2 & 5 \end{pmatrix} \begin{pmatrix} 7\\ 4 \end{pmatrix} \equiv \begin{pmatrix} 3 \times 7 + 3 \times 4\\ 2 \times 7 + 5 \times 4 \end{pmatrix} \equiv \begin{pmatrix} 21 + 12\\ 14 + 20 \end{pmatrix} \equiv \begin{pmatrix} 33\\ 34 \end{pmatrix} \equiv \begin{pmatrix} 7\\ 8 \end{pmatrix} \pmod{26}\\ \begin{pmatrix} 3 & 3\\ 2 & 5 \end{pmatrix} \begin{pmatrix} 11\\ 15 \end{pmatrix} \equiv \begin{pmatrix} 3 \times 11 + 3 \times 15\\ 2 \times 11 + 5 \times 15 \end{pmatrix} \equiv \begin{pmatrix} 33 + 45\\ 22 + 75 \end{pmatrix} \equiv \begin{pmatrix} 78\\ 97 \end{pmatrix} \equiv \begin{pmatrix} 0\\ 19 \end{pmatrix} \pmod{26}\\ \begin{pmatrix} 7\\ 8 \end{pmatrix}, \begin{pmatrix} 0\\ 19 \end{pmatrix} \rightarrow \begin{pmatrix} H\\ I \end{pmatrix}, \begin{pmatrix} A\\ T \end{pmatrix} \rightarrow HIAT\]

Okay so let me talk you through all that. First up we make a 2x2 matrix for our key (K); it could be any square size really, it just needs to be a matrix filled with letters, or you can even skip straight to the numbers so long as they're between 0 and 25. Then we convert those letters into numbers using our grid above. There are some additional considerations for the key but we'll look at why that is in a bit. Once you have the key sorted, as discussed above, you need to look at the last number in its size (2x2), and that tells you how many letters to divide your message up into, so here it's a two, meaning it uses digraphs. So then we split up our message of HELP to make two 2x1 matrices, meaning each can be multiplied by the key. A note here is that you could actually make one 2x2 matrix from the entire message, as it works the same; I've simply split them up to make the process easier to follow. So with the message "boysenberries" you could make a 2x7 matrix and pad the end with an 'x' or something. Matrix multiplication in this way works exactly the same, so long as you fill the columns first, then rows, meaning it would look like this:

\[ \begin{pmatrix} B & Y & E & B & R & I & S\\ O & S & N & E & R & E & X \end{pmatrix}\]

Or if we were to prepare it for our earlier 3x3 key it would look like this:

\[ \begin{pmatrix} B & S & B & R & S\\ O & E & E & I & X\\ Y & N & R & E & X \end{pmatrix}\]

Hopefully you can see that matrices, while a bit scary looking, aren't that complicated; they just hold a lot of data that is often tricky to comprehend. Think of them like this: you will almost certainly have seen Cartesian coordinates, which tend to look like this (x, y). They are like a very basic 1x2 matrix (usually you flip them into a 2x1 if using a matrix, but that's not important), and the coordinates tell you where a specific point is on a 2D plane, e.g. on a piece of paper. It's a really simple concept. Now imagine you need a line rather than a point. Well, you could add a second set of coordinates giving you a 2x2 matrix, and you know that line is just joining those two points. In fact usually it's a direction, so you put a little arrowhead on the line to say "the object moved from here to there in this direction". Here's where people often struggle to visualise, so just take it slow and don't worry about it too much, beyond 3D even great mathematicians struggle. We don't live in a 2D world, so to mark a point in space for us you add a z coordinate for each, so we now have a 3x2 matrix. That's just how it goes, you can just keep adding points and dimensions, and we could talk about things like tensors but we don't really need to. The main takeaway is to have a vague concept that it's just dimensionality and that we don't need the numbers to have a specific meaning; they are just a simple way to hold complex data we need to work with, and that's all that matters.

Alright so back to describing the process. Once we've broken up our message into convenient chunks, all we do is encode it, and we do this with matrix multiplication. Matrix multiplication is a little weird in how it's done, but you can trust me that the maths works out even though it seems like arbitrarily sticking numbers together. The way to think about this is to imagine our message is a shape within our graph, and matrix multiplication is a way to transform and distort that shape. So maybe we have a square and I stretch it out into a rectangle and then push it over so it becomes a rhombus, or reflect it in a mirror or rotate it; these are all transformations you can do with a matrix, and if you follow the rules of matrix multiplication that is how it does so correctly. In this case we are talking about letters, or rather digraphs, but the main point is the same: it comes out the other side looking nothing like it did before, and without knowledge of what was done to it, i.e. the key, reversing it is not an easy task. Here is a guide on how to do it that you can trust, and I'll just show you an example.

\[ \begin{pmatrix} a & b\\ c & d \end{pmatrix} \begin{pmatrix} e & f\\ g & h \end{pmatrix} \equiv \begin{pmatrix} a \times e + b \times g & a \times f + b \times h\\ c \times e + d \times g & c \times f + d \times h \end{pmatrix}\]
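If you would rather let a computer do the multiplying, here is a minimal Python sketch of the encryption step. It assumes the 2x2 key from the worked example, letters numbered from A at zero, and 'x' as padding. The name `hill_encrypt` is just mine.

```python
KEY = [[3, 3],
       [2, 5]]  # the D D / C E key from the worked example

def hill_encrypt(message: str, key=KEY) -> str:
    # Number the letters from a = 0, ignoring anything that isn't a-z.
    nums = [ord(ch) - ord("a") for ch in message.lower() if "a" <= ch <= "z"]
    n = len(key)
    nums += [ord("x") - ord("a")] * (-len(nums) % n)  # pad the final block
    out = []
    for i in range(0, len(nums), n):
        block = nums[i:i + n]  # one column of the message matrix
        for row in key:
            # Row of the key times the column of letters, reduced mod 26.
            out.append(sum(k * p for k, p in zip(row, block)) % 26)
    return "".join(chr(v + ord("a")) for v in out)

print(hill_encrypt("help"))  # hiat
```

Because each block is multiplied on its own, this gives the same answer as building one wide message matrix and multiplying it in a single step.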

So you should be able to confirm my earlier calculations, then once that is done all you have to do is convert the new values back into letters and you will have the ciphertext, in our case HIAT. Decryption requires us to finally talk about the topic I've been avoiding, inverses. Okay so if you've done fractions, you've probably looked at inverses before \(\frac{3}{4}\) is the reciprocal or inverse of \(\frac{4}{3}\), in fractions all you really do is flip it and you can extend that into exponents, \(4^{-2} = \frac{1}{4^2}\) is the inverse of \(4^2 = \frac{4^2}{1}\). The key defining feature is if you multiply them together they cancel each other out \(\frac{3}{4} \times \frac{4}{3} = 1\), this is a powerful feature in cryptography, because any number times by 1 is itself, so you can use inverses to dissolve cryptographic encoding away to nothing, this is the basis of asymmetric cryptography. Symmetric systems have just one key, you use it both to encrypt and to remove that encryption as it is easily reversible, asymmetric systems have two keys, one to encrypt, one to decrypt, doing it this way brings in a load of advantages that we will talk about in a future post, but the important point here is more often than not asymmetric systems are based around this multiplicative inverse dissolving the encryption, and that's what the Hill cipher does.

\[ \begin{pmatrix} 1 & 0 & 0\\ 0 & 1 & 0\\ 0 & 0 & 1 \end{pmatrix}\]

So how do you invert a matrix? Unfortunately, much like matrices themselves, the answer is a little complicated, but it starts with an identity matrix, like the one above. Identity matrices are easy to spot, as they are always square, so 1x1, 2x2, 3x3, 4x4, and they always have a diagonal of 1s running from the top left to the bottom right, and everything else is 0. An identity matrix is special because it's a bit like the number 1: if you multiply a matrix by the identity matrix of matching size, you just get the original matrix back, but it can also be used to find the inverse of a matrix via a number of techniques. These techniques are beyond scope, weird, kind of tricky and honestly pretty gross to use, but also sort of necessary if you work with matrices, so here is a guide on how to do it. To ensure you can decrypt, when you make your key you need to ensure your key matrix has an inverse, and then calculate what it is. That is your decryption key, and you can multiply your ciphertext by it and watch as the magic of maths dissolves the encryption and reveals your message. Let's decrypt our example using our inverse decryption key.

\[ K^{-1} = 9^{-1} \begin{pmatrix} 5 & 23\\ 24 & 3 \end{pmatrix} \equiv 3 \begin{pmatrix} 5 & 23\\ 24 & 3 \end{pmatrix} \equiv \begin{pmatrix} 15 & 17\\ 20 & 9 \end{pmatrix} \pmod{26}\\ HIAT \rightarrow \begin{pmatrix} H\\ I \end{pmatrix}, \begin{pmatrix} A\\ T \end{pmatrix} \rightarrow \begin{pmatrix} 7\\ 8 \end{pmatrix}, \begin{pmatrix} 0\\ 19 \end{pmatrix}\\ \begin{pmatrix} 15 & 17\\ 20 & 9 \end{pmatrix} \begin{pmatrix} 7\\ 8 \end{pmatrix} \equiv \begin{pmatrix} 15 \times 7 + 17 \times 8\\ 20 \times 7 + 9 \times 8 \end{pmatrix} \equiv \begin{pmatrix} 105 + 136\\ 140 + 72 \end{pmatrix} \equiv \begin{pmatrix} 241\\ 212 \end{pmatrix} \equiv \begin{pmatrix} 7\\ 4 \end{pmatrix} \pmod{26}\\ \begin{pmatrix} 15 & 17\\ 20 & 9 \end{pmatrix} \begin{pmatrix} 0\\ 19 \end{pmatrix} \equiv \begin{pmatrix} 15 \times 0 + 17 \times 19\\ 20 \times 0 + 9 \times 19 \end{pmatrix} \equiv \begin{pmatrix} 0 + 323\\ 0 + 171 \end{pmatrix} \equiv \begin{pmatrix} 323\\ 171 \end{pmatrix} \equiv \begin{pmatrix} 11\\ 15 \end{pmatrix} \pmod{26}\\ \begin{pmatrix} 7\\ 4 \end{pmatrix}, \begin{pmatrix} 11\\ 15 \end{pmatrix} \rightarrow \begin{pmatrix} H\\ E \end{pmatrix}, \begin{pmatrix} L\\ P \end{pmatrix} \rightarrow HELP\]
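For a 2x2 key the inverse is small enough to work out in code. The sketch below is one way to do it, assuming Python 3.8 or newer (where the built-in `pow` accepts a negative exponent with a modulus) and using the standard "swap the diagonal, negate the other two, divide by the determinant" rule for 2x2 matrices. The function names are my own.

```python
from math import gcd

def inverse_2x2_mod26(key):
    (a, b), (c, d) = key
    det = (a * d - b * c) % 26
    if gcd(det, 26) != 1:
        raise ValueError("this key matrix has no inverse mod 26")
    det_inv = pow(det, -1, 26)  # modular inverse of the determinant
    return [[(det_inv * d) % 26, (det_inv * -b) % 26],
            [(det_inv * -c) % 26, (det_inv * a) % 26]]

def apply_2x2(matrix, text: str) -> str:
    # Multiply each pair of letters (as a column) by the matrix, mod 26.
    nums = [ord(ch) - ord("a") for ch in text.lower()]
    out = []
    for i in range(0, len(nums), 2):
        x, y = nums[i], nums[i + 1]
        for row in matrix:
            out.append((row[0] * x + row[1] * y) % 26)
    return "".join(chr(v + ord("a")) for v in out)

K = [[3, 3], [2, 5]]
K_INV = inverse_2x2_mod26(K)
print(K_INV)                     # [[15, 17], [20, 9]]
print(apply_2x2(K_INV, "hiat"))  # help
```

The `gcd` check is the "additional consideration" for keys mentioned earlier: if the determinant shares a factor with 26, there is no inverse and the message cannot be decrypted.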

There you have it, now if you have understood very little of this section on the Hill cipher due to the complex maths that's perfectly fine, matrices and modular mathematics are both very weird and somewhat central to cryptography which is what makes it such a tricky subject to learn. To you it doesn't matter though, I still stand by my assertion that you can do a lot of good in cryptography without understanding any of the maths, what matters is the principles. I'm sure you're wondering how can the maths not matter? The reason why is this, 90% of all cryptographic flaws are not in the maths, they are in the implementation details. Cryptographers exist to theorise and develop cryptographic algorithms, they don't even attempt to codify anything until they feel they have a suitably strong mathematical proof, and they are smart, generally speaking the maths itself will never break, but they are not familiar with the tools of how these systems are built and operate, that is where the problems arise. Mathematicians generally need little help in advancing to become cryptographers, the paths are fairly straightforward, this series is not for them, it is for the software and systems engineers that will build, implement and operate these algorithms, and to do that it mostly boils down to trusting the maths and developing a secure solution. This series is here to teach the intuitions and knowledge to spot and test the obvious oversights in algorithms, the secrets passed in plaintext, the leaked keys, the timing flaws and all the general ways you might abuse such a system. This is not a cryptographers job, and that so far is the biggest problem in cryptography, there aren't a lot of expert engineers solving these problems, like many of you they fear the maths, and as a result it is why cryptography fails. To build a world where we hope to protect individual privacy we need to stop worrying about the maths, and start doing what we do best, the unexpected.

Unbreakable?

So, if we have such complex ciphers and they can be so tricky to break, why are they treated like a mild curiosity and what do we need modern cryptography for? Well as we have discussed before with Kerckhoffs, these old ciphers were just sort of strung together haphazardly, and that leaves them full of little weaknesses, modern cryptography is built on a simply unassailable foundation of mathematics, when the implementation is perfected it doesn't matter what approach you try, it will fail, ciphers could never hope to get close to that. We have however seen how these ciphers can be pretty formidable themselves, but they are not unbreakable. We have seen simple shifts crumble to brute force attempts, monoalphabetic and polyalphabetic ciphers alike shattered by use of frequency analysis, but surely we have moved beyond these simple attacks now? In a word, no.

We haven't formalized it yet, but brute force works because either the plaintext or the keyspace is finite. It has to be if it's on a computer, as it has a logical maximum value that it can hold, but more importantly it is small enough that you can simply try every single one until you find the right one. Keyspace in polygraphic ciphers is bounded by the size of the grid. Since Hill is the most flexible we'll look at that: there are \(26^{n^{2}}\) matrices of size \(n \times n\). That is not an arbitrary number, 26 is the number of letters you can use in each space and \(n\) is the width and height, so it's simple area maths. What's more, this is an upper bound, as not all matrices have an inverse, and some are inverses of others, so there are fewer keys than that. This means for our 2x2 key there are \(26^{2^{2}} = 26^{4} = 456,976\) keys, which seems like a lot but is trivial to a computer. For 3x3 there are \(26^{3^{2}} = 26^{9} = 5,429,503,678,976\), so it grows quickly, but remember so does the complexity of the algorithm itself, and this number is still trivial to a computer. At 5x5 it's around \(2.4 \times 10^{35}\), which is approaching half as many digits as you would need to genuinely slow a computer down. So polygraphic ciphers are brute forceable. What we must remember is that while a cipher may prove unbreakable to us, usually we are thinking about using the average equipment available to an ordinary person, and we expect it to be done in no more than a few hours, because we are thinking about CTFs. If I needed to pass a secret military message and used the Hill cipher to do it, that would be stupid, because my enemies will have access to supercomputers and datacentres full of specialized cracking equipment, and if it is a top secret document this information could need to remain secret for decades. The Hill cipher would not stand a chance against a prolonged attack with specialized equipment.
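To get a feel for how small the 2x2 keyspace really is, the short Python sketch below simply walks every possible 2x2 matrix and counts the ones that are usable as keys. It relies on the fact that a matrix has an inverse mod 26 exactly when its determinant shares no factor with 26. The variable names are my own.

```python
from itertools import product
from math import gcd

total = 26 ** 4  # every way of filling a 2x2 grid with letters

# A key is only usable if its determinant is coprime with 26.
usable = sum(
    1
    for a, b, c, d in product(range(26), repeat=4)
    if gcd((a * d - b * c) % 26, 26) == 1
)

print(total)   # 456976
print(usable)  # 157248
```

So only about a third of the candidate matrices are real keys, and a laptop can enumerate all of them in well under a minute.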

So what about frequency analysis? Again no, polygraphic ciphers are not immune to this sort of attack. In a monoalphabetic cipher it was easy, just count up the letters and see the fingerprint. In polyalphabetic it was a little harder as we needed to figure out the size of the key and focus on the cycles. Polygraphic throws a spanner in the works here as we are no longer looking at letter distributions since the letters are entangled, but there exist similar fingerprints for digraphs, trigraphs etc. This is information we gather purely to help our understanding of linguistics, but each time a pattern emerges, and thus frequency analysis continues to be useful. Hopefully you are now beginning to see that the real problem is that letters and words have inherent meaning, and that we cannot step away from these problems without stepping away from the letters themselves. That is a key insight, one that took an important group of some of the world's best minds until the 1930s-40s to figure out, so we aren't quite ready to walk away from letters just yet, because it took a long time for people to accept that the best way to send secret messages is not to use language at all, and you can probably understand why, it's a little counterintuitive. So now let's look at a different way to attack things that became a particular issue with some of these ciphers.

Known Plaintext

The real world is not like our neat little diagram with Alice, Bob and Eve, it is messy, full of unknowns, and humans can be the most unpredictable element of all in that often if you try to predict their behaviour they will break convention purely to spite you. We can't always guarantee that our cryptosystem is perfect, or that we successfully kept everything hidden from the enemy, sometimes it is possible that Eve knows some of the information we are trying to hide, perhaps she has guessed how we think, or maybe she is an insider threat that has access to some of our files, who knows? Our cryptosystems need to remain resilient under these conditions, and so we need to consider the Known Plaintext attack, where the attacker has uncovered some part of the secret message. A brilliant real world example of this is the realization in Bletchley Park that the last couple of words in every message from German High Command was always "Heil Hitler", this was pivotal in the efforts to crack Enigma that we will talk about in the next post, not only did it save them some time by skipping the last few characters, it gave them an example mapping, showing how the machines had been calibrated and thus gave clues as to what the rest of the message might be.

Let's look at an example we've previously used, ciphertext 1 for the Playfair cipher "vfqbonndehbpalpbkgfgpokmvfqbonndehbpalpbdmfwekmaegallfnzkmbhquikabpksygiikdmklfweksygizbgmkrqz". Firstly, there are ways to attack this one that, from what I have seen, give you a complete decryption in a matter of minutes, but we will look at those another time. Presented with this string it wouldn't take us a lot of work to conclude it's polygraphic: there are tools that will simply tell you with a high degree of confidence, or we would try the attacks for mono and polyalphabetic ciphers and then, turning up nothing, simply start attempting polygraphic attacks, where this cipher has some giveaway hints. Once we know that, we immediately start analyzing the letters in pairs, which will yield some interesting clues. Firstly, "vfqbonndehbpalpb" repeats, suggesting similar wording. The pairs "km", "al", "ik", "sy", "gi", "fw" and "ek" also repeat, and within the repeating section "bp" and "pb" appear very close to one another. This is a very clear sign of the Playfair cipher and indicates the original letters have been swapped, so if I had "elle" you could expect the encrypted version to be something like "yqqy". Only a small number of letter combinations behave this way, meaning we can infer a lot about what the underlying message says purely from the ciphertext. It is not unreasonable to assume that application of linguistic rules would allow an attacker to uncover something like "weshallfightonth??????esweshallfightonth??andi????ou????es????fi????ntthfi????andinthe????????" which is quite a significant amount of the text. If you know the speech, one look at that will give you the answer, but even if you didn't, you've already concluded it's Playfair, meaning you know how the key works and have quite a few characters to begin constructing it for yourself.
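The pair spotting described above is easy to automate. This is a small Python sketch that splits the ciphertext into digraphs, counts the repeats and looks for pairs that also show up reversed. It is only the first step of an attack, not a Playfair solver, and the variable names are my own.

```python
from collections import Counter

CIPHERTEXT = (
    "vfqbonndehbpalpbkgfgpokmvfqbonndehbpalpb"
    "dmfwekmaegallfnzkmbhquikabpksygiikdmklfweksygizbgmkrqz"
)

# Split into digraphs and count how often each one appears.
pairs = [CIPHERTEXT[i:i + 2] for i in range(0, len(CIPHERTEXT), 2)]
counts = Counter(pairs)
repeated = {pair: n for pair, n in counts.items() if n > 1}

# Pairs whose mirror image also appears somewhere in the ciphertext.
mirrored = sorted(
    {tuple(sorted((pair, pair[::-1])))
     for pair in counts
     if pair[0] != pair[1] and pair[::-1] in counts}
)

print(repeated)
print(mirrored)  # [('bp', 'pb')]
```

Running it shows "al" turning up three times and "bp"/"pb" as the only mirrored pair, which are exactly the sort of hints that point towards Playfair.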

Of course this is why I don't have a general program to help you with this one, the nature of a known plaintext attack is highly dependent on the algorithm in play, so there isn't really a general solution, only a general approach. All the ciphers we have discussed so far are very vulnerable to this sort of attack, and because of the imperfections of the real world and needs for technology to be cross-compatible this kind of attack crops up quite a bit, which is precisely why we need more resilient cryptosystems. The general rule is by having a known plaintext and its corresponding ciphertext you can reverse engineer the key and potentially crack the algorithm, making this a common kind of implementation attack. If I gave you the Caesar cipher of "olssv dvysk" and told you the first word is "hello" it won't take you long to calculate the key of 7. If I gave you the Vigenère cipher of "jeeno pqref" and told you again the first word is "hello", how long do you need to calculate that the key is "cat"? Polygraphics as we have seen while more tricky to reverse engineer are still vulnerable to this, and the Hill cipher particularly so. So hopefully now you can start to understand the importance of good reliable cryptography in a modern unreliable world.
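The three examples just mentioned can each be worked through in a few lines of Python. Treat this as a sketch of the general approach rather than a general tool: the function names are mine, the Vigenère helper just returns the shortest key consistent with the crib, and the Hill helper only handles a 2x2 key with exactly two known digraphs (here the "help" and "hiat" pair from earlier). It assumes Python 3.8 or newer for `pow` with a negative exponent.

```python
def shifts(plain: str, cipher: str) -> list:
    # How far each known plaintext letter was moved to reach its ciphertext letter.
    return [(ord(c) - ord(p)) % 26 for p, c in zip(plain, cipher)]

def caesar_key(plain: str, cipher: str) -> int:
    found = set(shifts(plain, cipher))
    if len(found) != 1:
        raise ValueError("shifts differ, so this is not a simple Caesar cipher")
    return found.pop()

def vigenere_key(plain: str, cipher: str) -> str:
    stream = "".join(chr(s + ord("a")) for s in shifts(plain, cipher))
    # Shortest repeating unit that explains the whole recovered key stream.
    for period in range(1, len(stream) + 1):
        if all(stream[i] == stream[i % period] for i in range(len(stream))):
            return stream[:period]
    return stream

def hill_key_2x2(plain: str, cipher: str) -> list:
    p = [ord(ch) - ord("a") for ch in plain]
    c = [ord(ch) - ord("a") for ch in cipher]
    # Digraphs are columns: P = [[p0, p2], [p1, p3]], C likewise, and K = C * P^-1.
    det_inv = pow((p[0] * p[3] - p[2] * p[1]) % 26, -1, 26)  # ValueError if P has no inverse
    p_inv = [[(det_inv * p[3]) % 26, (det_inv * -p[2]) % 26],
             [(det_inv * -p[1]) % 26, (det_inv * p[0]) % 26]]
    c_mat = [[c[0], c[2]], [c[1], c[3]]]
    return [[sum(c_mat[i][k] * p_inv[k][j] for k in range(2)) % 26 for j in range(2)]
            for i in range(2)]

print(caesar_key("hello", "olssv"))    # 7
print(vigenere_key("hello", "jeeno"))  # cat
print(hill_key_2x2("help", "hiat"))    # [[3, 3], [2, 5]]
```

Notice that four known letters are enough to hand over the whole 2x2 Hill key, because the cipher is nothing more than a set of linear equations.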